
Fragments of the eventfd context release and poll paths:

    static void eventfd_free(struct kref *kref)
        struct eventfd_ctx *ctx = container_of(kref, struct eventfd_ctx, kref);

    /**
     * eventfd_ctx_put - Releases a reference to the internal eventfd context.
     * The eventfd context reference must have been previously acquired either
     * with eventfd_ctx_fdget() or eventfd_ctx_fileget().
     */
    void eventfd_ctx_put(struct eventfd_ctx *ctx)

    static int eventfd_release(struct inode *inode, struct file *file)
        struct eventfd_ctx *ctx = file->private_data;

    static __poll_t eventfd_poll(struct file *file, poll_table *wait)
        /* All writes to ctx->count occur within ctx->wqh.lock. */

Fragments of the eventfd signalling path:

    /*
     * Deadlock or stack overflow issues can happen if we recurse here
     * nested waitqueues with custom wakeup handlers, then it should
     * check eventfd_signal_allowed() before calling this function.
     * it returns false, the eventfd_signal() call should be deferred to a
     */
    if (WARN_ON_ONCE(current->in_eventfd))
    spin_lock_irqsave(&ctx->wqh.lock, flags);
    wake_up_locked_poll(&ctx->wqh, EPOLLIN | mask);

    /**
     * eventfd_signal - Adds to the eventfd counter.
     * Value of the counter to be added to the eventfd internal counter.
     * This function is supposed to be called by the kernel in paths that do not
     * In this function we allow the counter to reach the ULLONG_MAX
     * value, and we signal this as overflow condition by returning a EPOLLERR
     * Returns the amount by which the counter was incremented.
     */
    __u64 eventfd_signal(struct eventfd_ctx *ctx, __u64 n)

    static void eventfd_free_ctx(struct eventfd_ctx *ctx)
        ida_simple_remove(&eventfd_ida, ctx->id);
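The eventfd_signal comment above says the counter is allowed to reach ULLONG_MAX and that the function returns the amount by which the counter was actually incremented. A minimal userspace sketch of that clamped addition might look like the following. It is not the kernel code: `struct sketch_eventfd` and `sketch_eventfd_signal` are hypothetical names, and the locking and wakeup steps (`spin_lock_irqsave`, `wake_up_locked_poll`) are deliberately left out.

```c
#include <stdint.h>

/* Hypothetical stand-in for the eventfd context; only the counter is modelled. */
struct sketch_eventfd {
    uint64_t count;
};

/*
 * Add n to the counter without ever wrapping past UINT64_MAX
 * (used here as the 64-bit equivalent of ULLONG_MAX).
 * Returns the amount actually added, which is smaller than n
 * when the counter would otherwise overflow.
 * Assumption: the caller provides whatever locking is required.
 */
static uint64_t sketch_eventfd_signal(struct sketch_eventfd *ctx, uint64_t n)
{
    uint64_t room = UINT64_MAX - ctx->count;

    if (n > room)
        n = room;           /* clamp instead of wrapping around */
    ctx->count += n;
    return n;
}
```

With a counter two below the maximum, a request to add 10 adds only 2 and returns 2. A further request then adds nothing and returns 0.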

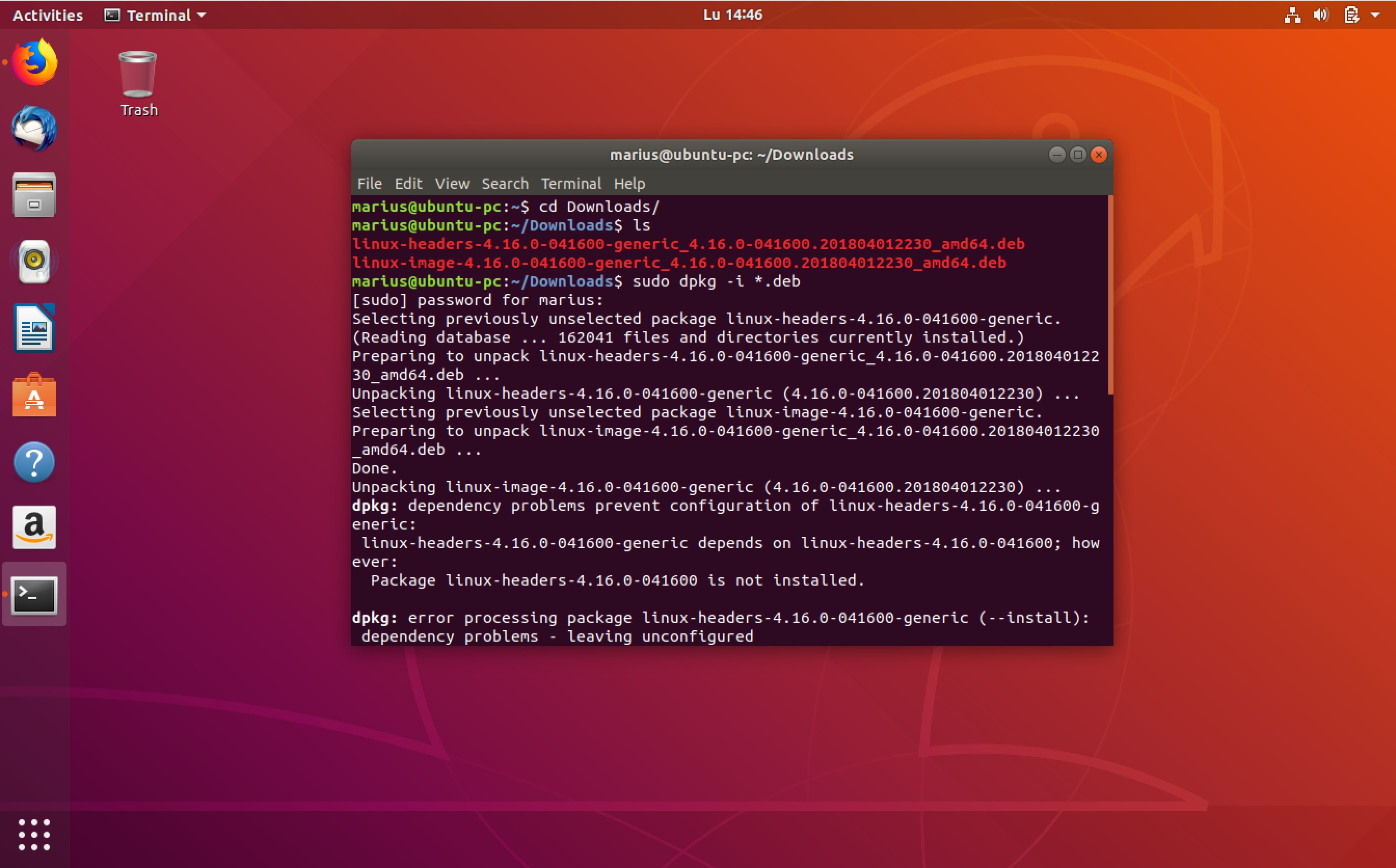

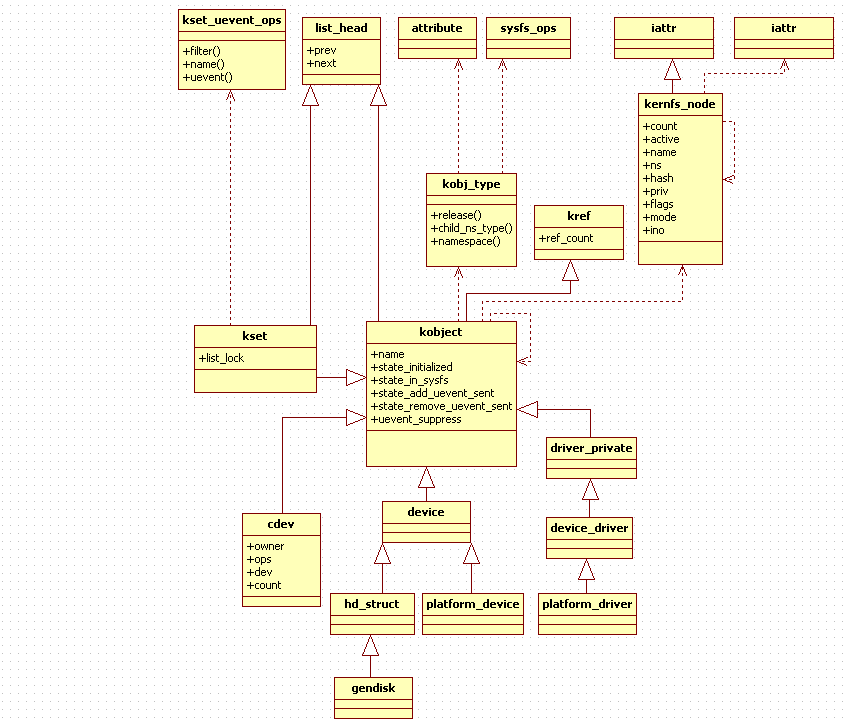

Fragments of the eventfd file header and counter description:

    // SPDX-License-Identifier: GPL-2.0-only
    #include ...    (18 directives; header names missing)
    static DEFINE_IDA(eventfd_ida);

    /*
     * Every time that a write(2) is performed on an eventfd, the
     * value of the __u64 being written is added to "count" and a
     * specified, a read(2) will return the "count" value to userspace,
     * also, adds to the "count" counter and issue a wakeup.
     */
    __u64 eventfd_signal_mask(struct eventfd_ctx *ctx, __u64 n, __poll_t mask)

Linux-Kernel Archive: kref: Implement using refcount_t

From: Peter Zijlstra

In reply to: Kees Cook: "Re: kref: Add kref_read()"

Next in thread: Ingo Molnar: "Re: kref: Implement using refcount_t"

Provide refcount_t, an atomic_t like primitive built just for refcounting. It provides overflow and underflow checks as well as saturation semantics such that when it overflows, we'll never attempt to free it.

Fragments of the patch:

    +static inline void refcount_set(refcount_t *r, int n)
    +static inline unsigned int refcount_read(const refcount_t *r)

    + * Similar to atomic_inc(), will BUG on overflow and saturate at UINT_MAX.
    + * Provides no memory ordering, it is assumed the caller already has a
    + * reference on the object, will WARN when this is not so.
    +static inline void refcount_inc(refcount_t *r)
    +	unsigned int old, new, val = atomic_read(&r->refs);

    + * Similar to atomic_inc_not_zero(), will BUG on overflow and saturate at UINT_MAX.
    + * Provides no memory ordering, it is assumed the caller has guaranteed the
    + * object memory to be stable (RCU, etc.).
    +bool refcount_inc_not_zero(refcount_t *r)

    + * Similar to atomic_dec_and_test(), it will BUG on underflow and fail to
    + * decrement when saturated at UINT_MAX.
    + * Provides release memory ordering, such that prior loads and stores are done
    +bool refcount_dec_and_test(refcount_t *r)
    +	old = atomic_cmpxchg_release(&r->refs, val, new);

    + * Similar to atomic_dec_and_mutex_lock(), it will BUG on underflow and fail
    + * to decrement when saturated at UINT_MAX.
    + * This allows free() while holding the mutex.
    +bool refcount_dec_and_mutex_lock(refcount_t *r, struct mutex *lock)

    + * Similar to atomic_dec_and_lock(), it will BUG on underflow and fail
    + * This allows free() while holding the lock.
    +bool refcount_dec_and_lock(refcount_t *r, spinlock_t *lock)
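The refcount_t comments above describe two behaviours that are easy to miss: an increment refuses to resurrect a count of zero, and a count that has saturated at UINT_MAX is never decremented again, so the object is leaked rather than freed. The following is a rough userspace sketch of those semantics using C11 atomics. It is an illustration under assumptions, not the patch itself: every `sketch_` name is hypothetical, and the BUG/WARN reporting the patch mentions is reduced to a plain return value.

```c
#include <limits.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical userspace analogue of refcount_t. */
struct sketch_refcount {
    atomic_uint refs;
};

static void sketch_refcount_set(struct sketch_refcount *r, unsigned int n)
{
    atomic_store_explicit(&r->refs, n, memory_order_relaxed);
}

static unsigned int sketch_refcount_read(struct sketch_refcount *r)
{
    return atomic_load_explicit(&r->refs, memory_order_relaxed);
}

/* Take a reference unless the count is already zero. No memory ordering. */
static bool sketch_refcount_inc_not_zero(struct sketch_refcount *r)
{
    unsigned int val = atomic_load_explicit(&r->refs, memory_order_relaxed);

    for (;;) {
        if (val == 0)
            return false;       /* object is already on its way out */
        if (val == UINT_MAX)
            return true;        /* saturated: stay pinned at UINT_MAX */
        /* On failure, val is refreshed with the current value and we retry. */
        if (atomic_compare_exchange_weak_explicit(&r->refs, &val, val + 1,
                memory_order_relaxed, memory_order_relaxed))
            return true;
    }
}

/* Drop a reference; returns true only when the count reaches zero. */
static bool sketch_refcount_dec_and_test(struct sketch_refcount *r)
{
    unsigned int val = atomic_load_explicit(&r->refs, memory_order_relaxed);

    for (;;) {
        if (val == UINT_MAX)
            return false;       /* saturated: never decrement, never free */
        if (val == 0)
            return false;       /* underflow: the patch reports this case */
        /* Release ordering so earlier accesses complete before a free. */
        if (atomic_compare_exchange_weak_explicit(&r->refs, &val, val - 1,
                memory_order_release, memory_order_relaxed))
            return val == 1;    /* val still holds the pre-decrement value */
    }
}
```

A caller would free the object only when `sketch_refcount_dec_and_test` returns true. Because a saturated counter always returns false, an overflowed object is leaked instead of being freed while references may still exist.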
